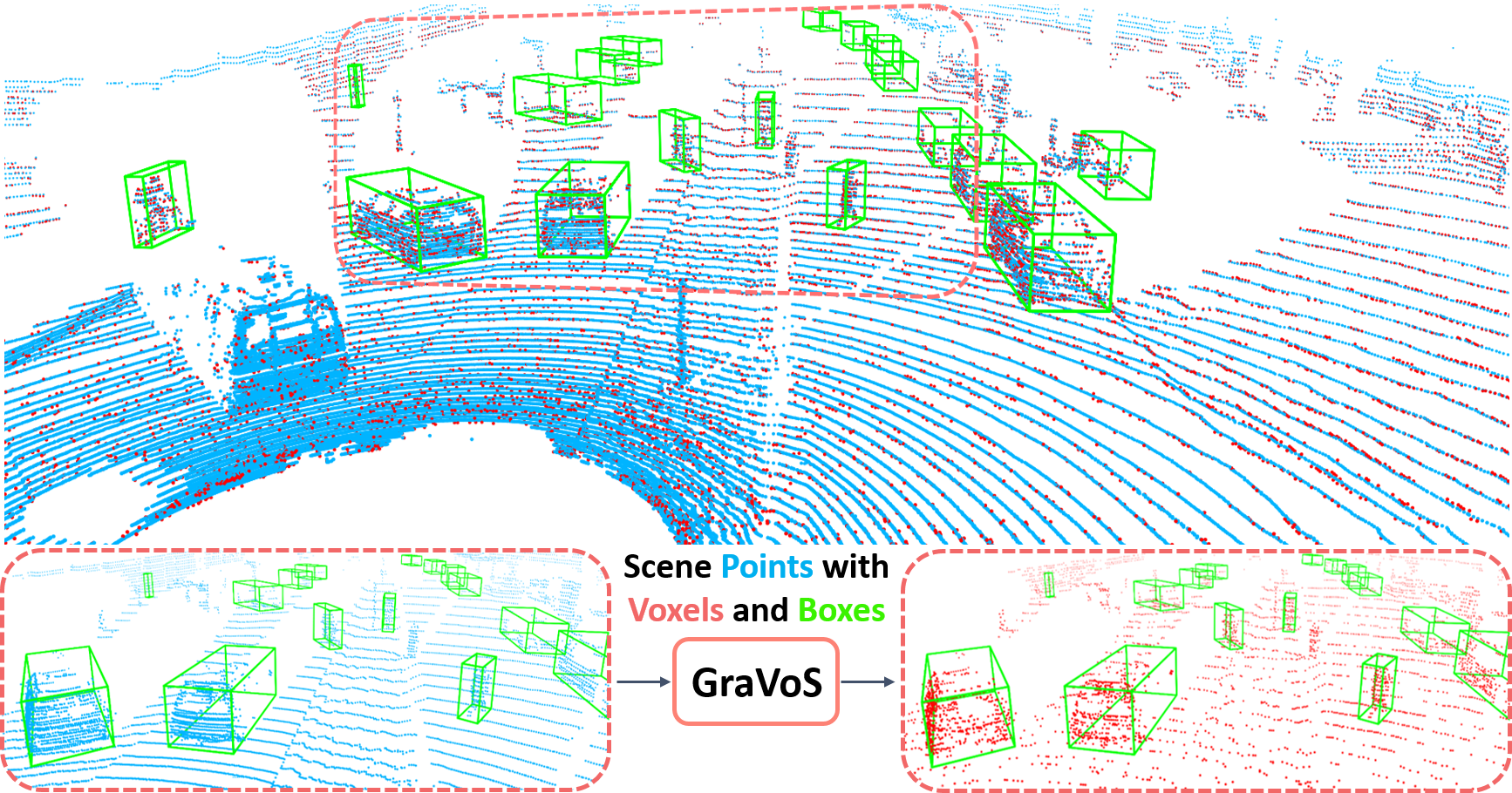

GraVoS for 3D object detection. Given a 3D point cloud and its associated voxels, we propose a method that selects a subset of network-dependent meaningful voxels.

3D object detection within large 3D scenes is challenging not only because 3D point clouds are sparse and irregular, but also because of the extreme foreground-background imbalance in the scene and the class imbalance. A common approach is to add ground-truth objects from other scenes. In contrast, we propose to modify the scenes by removing elements (voxels) rather than adding them. Our approach selects the "meaningful" voxels in a manner that addresses both types of dataset imbalance. The approach is general and can be applied to any voxel-based detector, yet the meaningfulness of a voxel is network-dependent. Our voxel selection is shown to improve the performance of several prominent 3D detection methods.

This work was supported by the Advanced Defense Research Institute (ADRI), Technion. Yizhak Ben-Shabat has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Sklodowska-Curie grant agreement No. 893465. We also thank the NVIDIA Academic Hardware Grant Program for providing an A5000 GPU.

@article{shrout2022gravos,
  title={GraVoS: Gradient based Voxel Selection for 3D Detection},
  author={Shrout, Oren and Ben-Shabat, Yizhak and Tal, Ayellet},
  journal={arXiv preprint arXiv:2208.08780},
  year={2022}
}